AI Infrastructure Economics 2025: Building Sustainable, Scalable Systems That Don't Break Your Budget

The 945 Terawatt-Hour Problem: Why Your AI Budget Is About to Explode

The European Commission's latest analysis reveals that data centers consumed 2.6% of global electricity in 2023, with AI workloads now representing 60% of that consumption. Projected forward, data centers could consume around 945 TWh annually by 2030.

For enterprises deploying production AI systems, this trajectory creates a harsh economic reality: unless the infrastructure is architected strategically, its costs can exceed the value the AI delivers.

The Math That Breaks Most AI Budgets

Consider a typical enterprise deploying production AI:

Cloud-Only Approach (Traditional):

- GPU compute (A100/H100): roughly $1.00-2.00 per GPU-hour (on-demand rates at some providers run $2.50-4.00 or higher)

- Running 24/7 for inference: $730-1,460 monthly per GPU

- 10 GPUs for production capacity: $7,300-14,600 monthly

- Annual infrastructure cost: $87,600-175,200 for compute alone

- Add: Storage ($1,200/month), networking ($3,000/month), backup ($800/month)

- Actual annual cost: $130,000-220,000 for basic infrastructure

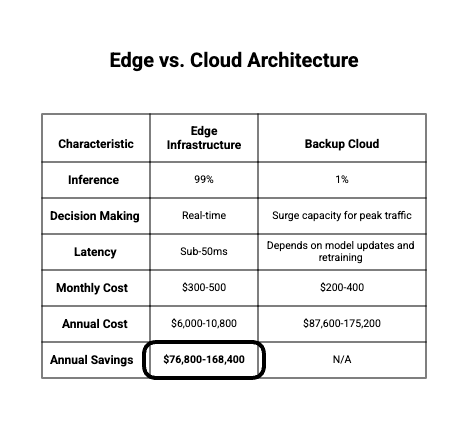

Edge + Hybrid Approach (Strategic):

- Edge deployment on NVIDIA Jetson: $500-2,000 one-time

- On-premises inference compute: $100-400 monthly

- Cloud backup/overflow capacity: $1,500-3,000 monthly

- Annual infrastructure cost: $20,000-50,000

The Delta: $80,000-170,000 annual savings through architectural optimization

For enterprises with 20+ production AI systems, this difference scales to $1.6-3.4M in annual infrastructure savings—money that could fund innovation, market expansion, or shareholder returns.
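The cloud-versus-edge comparison reduces to a few lines of arithmetic. This sketch assumes 730 hours per month, so the $730-1,460 monthly figure per GPU corresponds to $1.00-2.00 per GPU-hour, and it treats edge hardware as a first-year cost; the function names are illustrative, not part of any tool.

```python
HOURS_PER_MONTH = 730  # 8,760 hours in a year / 12 months


def cloud_annual_cost(gpus: int, rate_per_gpu_hour: float, other_monthly: float) -> float:
    """Always-on cloud GPUs plus fixed monthly add-ons (storage, networking, backup)."""
    compute = gpus * rate_per_gpu_hour * HOURS_PER_MONTH * 12
    return compute + other_monthly * 12


def edge_annual_cost(hardware_one_time: float, onprem_monthly: float,
                     cloud_overflow_monthly: float) -> float:
    """First-year cost of an edge + hybrid setup: hardware plus recurring spend."""
    return hardware_one_time + (onprem_monthly + cloud_overflow_monthly) * 12


if __name__ == "__main__":
    add_ons = 1_200 + 3_000 + 800  # storage + networking + backup, per month
    for rate in (1.0, 2.0):  # per GPU-hour; 730 h x $1-2 = $730-1,460 per GPU-month
        print(f"cloud, 10 GPUs @ ${rate:.2f}/GPU-h: ${cloud_annual_cost(10, rate, add_ons):,.0f}")
    print(f"edge (low):  ${edge_annual_cost(500, 100, 1_500):,.0f}")
    print(f"edge (high): ${edge_annual_cost(2_000, 400, 3_000):,.0f}")
```

With these inputs the cloud figure comes to $147,600-235,200 a year, and the listed edge items sum to $19,700-42,800 in year one, close to the stated $20,000-50,000 range.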

Why Enterprise AI Infrastructure Costs Spiral

IBM's research into hidden AI costs identifies three cost escalation patterns:

- Inefficient Model Deployment - Full models deployed when 70% of use cases only need 20% of model capability

- Redundant Infrastructure - Separate compute resources for each AI system rather than shared orchestration

- Poor Resource Utilization - Batch processing and always-on deployments when event-driven processing would suffice

The Infrastructure Crisis: What Companies Without Strategic Planning Face

The Compliance & Energy Challenge

The European Union's recently enacted AI Act imposes obligations on high-risk AI systems, including technical documentation (Article 11), automatic record-keeping (Article 12), and transparency toward deployers (Article 13). Meeting them requires extensive logging and monitoring infrastructure.

Hidden Compliance Costs:

- Audit logging infrastructure: $500K-2M setup, $100K+ annually

- Monitoring and observability platforms (Datadog, Splunk, Elastic): $50K-200K annually

- Compliance automation tools: $30K-100K annually

- Security infrastructure (encryption, key management): $50K-150K annually

Total Annual Compliance Overhead: $230,000-550,000+ for enterprises (excluding one-time setup)

Companies that budgeted only for model training and inference suddenly face 2-3x higher infrastructure costs when compliance requirements emerge post-deployment.
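Summing the annual line items shows how the total range is built. In this sketch the audit-logging entry, listed only as "$100K+", is entered as $100K at both bounds, so the computed upper bound is a floor rather than a ceiling; one-time setup costs are excluded.

```python
# Annual compliance line items from the list above, as (low, high) in USD.
# Audit logging is listed only as "$100K+", so 100K is used for both bounds;
# the computed upper bound is therefore a floor, not a ceiling.
ANNUAL_COMPLIANCE_COSTS = {
    "audit_logging": (100_000, 100_000),
    "monitoring_observability": (50_000, 200_000),
    "compliance_automation": (30_000, 100_000),
    "security": (50_000, 150_000),
}


def total_range(items: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the low and high bounds independently."""
    low = sum(lo for lo, _ in items.values())
    high = sum(hi for _, hi in items.values())
    return low, high


if __name__ == "__main__":
    low, high = total_range(ANNUAL_COMPLIANCE_COSTS)
    print(f"Annual compliance overhead: ${low:,}-{high:,}+ (excluding one-time setup)")
```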

The Environmental & Regulatory Risk

Emerging Financial Risk:

- European carbon tax: €80-120 per ton CO2 (expanding globally)

- For a data center consuming 10 MW: approximately €200K-400K annually in carbon taxes on a low-carbon grid, and several times that on a fossil-heavy one

- ESG rating penalties: 5-15% stock valuation reduction for high-carbon operations

- Supply chain restrictions: Customers now selecting vendors based on carbon footprint

Organizations deploying energy-inefficient AI infrastructure face not just operational costs but also regulatory penalties, supply chain exclusion, and valuation pressure.
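The carbon figures above depend on three inputs: the electrical load, the carbon intensity of the grid supplying it, and the carbon price. The sketch below makes that dependency explicit. The two emission factors (0.03 and 0.25 t CO2 per MWh) are illustrative assumptions, not figures from this article. At €80-120 per ton, a constant 10 MW load (87,600 MWh a year) lands in the €200K-400K range only on a very low-carbon supply.

```python
HOURS_PER_YEAR = 8_760


def annual_carbon_cost_eur(load_mw: float, grid_t_per_mwh: float, price_eur_per_t: float) -> float:
    """Carbon cost of a constant electrical load running for a full year."""
    energy_mwh = load_mw * HOURS_PER_YEAR        # MW x hours = MWh
    emissions_t = energy_mwh * grid_t_per_mwh    # tonnes of CO2
    return emissions_t * price_eur_per_t


if __name__ == "__main__":
    # Illustrative emission factors (assumptions): very low-carbon vs. fossil-heavy supply.
    for intensity in (0.03, 0.25):
        low = annual_carbon_cost_eur(10, intensity, 80)
        high = annual_carbon_cost_eur(10, intensity, 120)
        print(f"10 MW at {intensity} t/MWh: EUR {low:,.0f} - {high:,.0f} per year")
```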

The Solution Architecture: Production-Grade, Cost-Effective, Sustainable

Pillar 1: Right-Sizing Compute Resources

The Model Segmentation Strategy

Not all AI tasks require frontier large language models. Research shows that 70% of enterprise AI tasks can be handled by models with 70% fewer parameters.

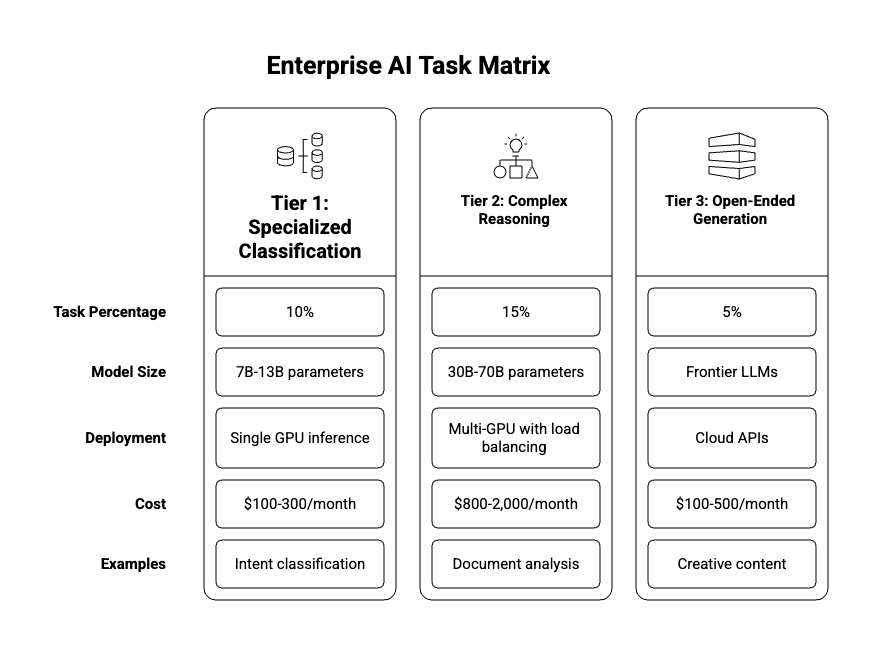

Task Classification Framework:

Cost Impact: By deploying right-sized models, enterprises typically reduce compute costs 40-60% while maintaining quality.
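One way to put model segmentation into practice is a router that sends each request to the cheapest model tier qualified to handle it. This sketch is hypothetical throughout. The tier names, the 1-5 complexity scale, and the per-request prices are placeholders. In a real deployment, the complexity score would come from a classifier or from per-task rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    max_complexity: int          # highest task complexity this tier handles (hypothetical 1-5 scale)
    cost_per_1k_requests: float  # placeholder price in USD


# Hypothetical tiers, ordered cheapest first. Names and prices are placeholders.
TIERS = [
    ModelTier("small-quantized", max_complexity=2, cost_per_1k_requests=0.05),
    ModelTier("mid-size", max_complexity=4, cost_per_1k_requests=0.40),
    ModelTier("frontier", max_complexity=5, cost_per_1k_requests=3.00),
]


def route(task_complexity: int) -> ModelTier:
    """Return the cheapest tier whose ceiling covers the task."""
    for tier in TIERS:
        if task_complexity <= tier.max_complexity:
            return tier
    raise ValueError(f"no tier handles complexity {task_complexity}")


def blended_cost_per_1k(mix: dict[int, float]) -> float:
    """Average cost per 1k requests for a traffic mix {complexity: share}."""
    return sum(share * route(c).cost_per_1k_requests for c, share in mix.items())


if __name__ == "__main__":
    mix = {1: 0.7, 3: 0.2, 5: 0.1}  # hypothetical: most traffic is simple
    print(f"routed:       ${blended_cost_per_1k(mix):.3f} per 1k requests")
    print(f"all frontier: ${TIERS[-1].cost_per_1k_requests:.3f} per 1k requests")
```

The savings come entirely from the traffic mix: the more requests the small tier can absorb, the further the blended cost falls below the frontier price.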

Pillar 2: Edge-First Architecture for Latency & Cost

The Edge Computing Advantage

Edge Deployment Technologies:

- NVIDIA Jetson Series - $200-2,000 one-time investment for local GPU compute

- TensorRT - Model optimization achieving 10-40x faster inference on edge devices

- ONNX Runtime - Cross-platform model execution for flexible deployment

- Docker containers - Standardized edge application deployment

Edge Architecture Pattern:

Hybrid Edge-Cloud Architecture
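A hybrid edge-cloud setup can be sketched as edge-first routing with cloud overflow. Each edge node serves what it can, and any traffic beyond its capacity spills to cloud capacity. This is a minimal simulation under simplifying assumptions: a fixed per-step edge capacity, with no modelling of failover or latency. `split_traffic` and its inputs are hypothetical. The share of traffic that spills over is what sizes the "cloud backup/overflow capacity" line in the budget.

```python
def split_traffic(arrivals_per_step: list[int], edge_capacity: int) -> tuple[int, int]:
    """Edge-first routing: each step, serve up to edge_capacity requests locally
    and send the remainder to cloud overflow capacity."""
    edge_served = cloud_served = 0
    for arrivals in arrivals_per_step:
        local = min(arrivals, edge_capacity)
        edge_served += local
        cloud_served += arrivals - local
    return edge_served, cloud_served


if __name__ == "__main__":
    # Hypothetical load profile with two bursts above edge capacity.
    arrivals = [50, 80, 120, 60, 200]
    edge, cloud = split_traffic(arrivals, edge_capacity=100)
    print(f"edge: {edge}, cloud: {cloud}, cloud share: {cloud / (edge + cloud):.1%}")
```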

Real-World Example - Manufacturing Quality Control:

Pillar 3: Efficient Model Architecture & Optimization

Model Compression Techniques

Deploying uncompressed, full-precision models often wastes infrastructure. Optimization techniques can reduce model size 80-95% with minimal accuracy loss:

Quantization:

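- INT8 quantization reduces model size 4x relative to FP32, typically with <2% accuracy loss

- Mixed precision (FP16/FP32) provides roughly 2x memory reduction versus FP32

- TensorRT and TVM implementations can achieve 10-40x inference speedups in favorable cases

- Cost impact: 4x smaller models can cut inference cost by up to roughly 4x, depending on whether the workload is memory-bound or compute-bound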
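The 4x storage figure follows directly from the bit widths. This minimal sketch of symmetric per-tensor INT8 quantization, in plain Python, makes the point concrete: each FP32 weight maps to an 8-bit integer through a single shared scale factor. Production toolchains such as TensorRT add calibration data, per-channel scales, and fused integer kernels. This sketch covers only the storage arithmetic and the rounding-error bound.

```python
from array import array


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor INT8 quantization: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map integers back to approximate float weights."""
    return [v * scale for v in q]


def storage_ratio(weights: list[float], q: list[int]) -> float:
    """Bytes as FP32 ('f', 4 bytes each) divided by bytes as INT8 ('b', 1 byte each)."""
    fp32_bytes = len(array("f", weights)) * array("f").itemsize
    int8_bytes = len(array("b", q)) * array("b").itemsize
    return fp32_bytes / int8_bytes


if __name__ == "__main__":
    w = [0.5, -1.27, 0.0, 1.0, 0.033]
    q, s = quantize_int8(w)
    err = max(abs(a - b) for a, b in zip(w, dequantize(q, s)))
    print(q, f"scale={s:.4f}", f"max error={err:.4f}", f"{storage_ratio(w, q):.0f}x smaller")
```

Every dequantized weight lands within half a quantization step (scale / 2) of the original value. Accuracy loss stays small when the weights have no extreme outliers that inflate the scale.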

Pruning & Distillation:

- Knowledge distillation - train small student models from large teacher models

- Structured pruning - remove redundant channels, attention heads, or layers

- Lottery ticket hypothesis - identify minimal weight subsets for equivalent performance

- Reported results: up to 90% parameter reduction with 95%+ accuracy retention
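Knowledge distillation trains the student to match the teacher's softened output distribution. Below is a minimal sketch of the standard soft-target loss, following Hinton et al.'s formulation: temperature-scaled softmax, KL divergence from teacher to student, multiplied by T². The function names and the default temperature of 2.0 are illustrative choices.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; subtracting the max keeps exp() from overflowing."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: list[float], teacher_logits: list[float],
                      temperature: float = 2.0) -> float:
    """Soft-target loss: KL(teacher || student) on softened distributions, times T^2."""
    p = softmax(teacher_logits, temperature)   # teacher's soft targets
    q = softmax(student_logits, temperature)   # student's softened predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [1.0, 2.0, 3.0]
    print(distillation_loss([1.0, 2.0, 3.0], teacher))   # student matches teacher
    print(distillation_loss([3.0, 2.0, 1.0], teacher))   # student disagrees
```

In training, this term is usually blended with the ordinary cross-entropy loss on hard labels, weighted by a hyperparameter.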

Cost Impact: Optimized models reduce GPU requirements by 50-75%, translating to $40K-120K annual savings per inference workload.

Pillar 4: Efficient Data Processing Pipelines

Streaming vs. Batch Trade-offs

Most enterprises default to batch processing for infrastructure simplicity, but streaming architectures often deliver both better latency and lower costs:

Batch Processing Economics:

- GPU allocated 24/7 for periodic batch jobs

- Idle more than 90% of the time for a latency-tolerant workload that needs only 2 hours of GPU time per day

- Cost: $87,600 annually for compute that processes 2 hours of daily workload

Streaming Processing Economics:

- GPU scaled dynamically based on real-time demand

- Apache Kafka handles queuing and load distribution

- GPU spins down during idle periods

- Cost: $18,000-30,000 annually for equivalent processing volume
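The gap between the two cost lines comes mostly from utilization. The $87,600 figure is consistent with 10 GPUs at $1 per GPU-hour running all year. If the same GPUs only need to be busy 2 hours a day, paying per busy hour costs a small fraction of that. The sketch below reproduces this arithmetic. `overhead_per_year` is a hypothetical parameter standing in for the streaming stack (brokers, stream processors, warm capacity), which explains why realistic on-demand totals sit above the bare GPU-hours.

```python
HOURS_PER_YEAR = 8_760


def always_on_cost(gpus: int, rate_per_gpu_hour: float) -> float:
    """GPUs reserved around the clock, busy or not."""
    return gpus * rate_per_gpu_hour * HOURS_PER_YEAR


def on_demand_cost(gpus: int, rate_per_gpu_hour: float, busy_hours_per_day: float,
                   overhead_per_year: float = 0.0) -> float:
    """GPUs billed only while processing, plus a hypothetical fixed overhead
    for the streaming stack (brokers, stream processors, warm capacity)."""
    return gpus * rate_per_gpu_hour * busy_hours_per_day * 365 + overhead_per_year


def utilization(busy_hours_per_day: float) -> float:
    """Fraction of the day an always-on GPU is actually busy."""
    return busy_hours_per_day / 24


if __name__ == "__main__":
    print(f"always-on:   ${always_on_cost(10, 1.0):,.0f}")
    print(f"on-demand:   ${on_demand_cost(10, 1.0, 2):,.0f} (GPU-hours only)")
    print(f"utilization: {utilization(2):.0%} when always-on")
```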

Implementation Stack:

- Apache Kafka for event streaming and queuing

- Apache Flink or Spark Streaming for real-time processing

- Redis for low-latency feature serving

- Kubernetes for dynamic GPU allocation
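For dynamic GPU allocation, a common approach is to scale workers on consumer lag rather than on CPU. Kubernetes' Horizontal Pod Autoscaler computes desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric); with a per-replica average lag target, this reduces to ceil(totalLag / targetLagPerReplica). The sketch below implements that rule with clamping. The parameter names are hypothetical. Scale-to-zero usually requires an add-on such as KEDA, because the standard HPA keeps at least one replica by default.

```python
import math


def desired_replicas(total_lag: int, target_lag_per_replica: int,
                     min_replicas: int = 0, max_replicas: int = 10) -> int:
    """Lag-proportional replica count, clamped to [min_replicas, max_replicas].

    Mirrors the HPA average-value rule ceil(total / target); min_replicas=0
    models scale-to-zero when the queue is empty.
    """
    if total_lag <= 0:
        return min_replicas
    wanted = math.ceil(total_lag / target_lag_per_replica)
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    for lag in (0, 250, 5_000):
        print(lag, "->", desired_replicas(lag, target_lag_per_replica=100))
```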

Cost Impact: Switching from batch to streaming typically reduces compute costs 60-75% while improving decision latency 80-95%.

The ROI Case Studies: From Theory to Production Savings

Case 1: Financial Services - Real-Time Fraud Detection

Initial Approach (Pilot):

- Cloud-based deployment on AWS with on-demand GPU instances

- Full 70B parameter model for fraud detection

- Monthly compute cost: $18,000

Optimized Approach (Production):

- Edge deployment on local Jetson clusters with cloud overflow

- Quantized 7B small language model (SLM) optimized for fraud classification

- Streaming data pipeline with Redis feature serving

- Monthly compute cost: $2,400

Annual Savings: $187,200

Implementation Timeline: 12 weeks

ROI: 340% in year one (savings exceed implementation cost)

Additional Benefits:

- Sub-10ms fraud detection latency (vs 2-5 second batch processing)

- Real-time customer experience improvement

- Regulatory compliance with on-premises processing for sensitive data

Case 2: Healthcare - Patient Demand Forecasting

Initial Approach:

- Weekly batch processing on 4x GPU cluster

- Full 13B language model for analyzing patient intake patterns

- Monthly infrastructure: $14,000

Optimized Approach:

- Real-time streaming pipeline with small language models (SLMs) at the edge

- Event-driven inference triggered by new patient registrations

- Distributed Jetson deployment across 8 clinical sites

- Monthly infrastructure: $3,200

Annual Savings: $129,600

Implementation Timeline: 10 weeks

ROI: 285% in year one

Additional Benefits:

- Real-time staffing recommendations vs weekly batch updates

- Reduced emergency department wait times by 40%

- Improved patient satisfaction and outcomes

- HIPAA compliance through local processing

Case 3: Manufacturing - Equipment Maintenance Prediction

Initial Approach:

- Cloud-based ML pipeline with SageMaker

- Daily retraining of 30B prediction models

- Monthly cost: $22,000

Optimized Approach:

- Edge deployment with continuous learning on local Jetson devices

- Lightweight 7B models updated incrementally, not retrained from scratch

- Hybrid architecture for model updates via cloud

- Monthly cost: $4,100

Annual Savings: $214,800

Implementation Timeline: 14 weeks

ROI: 390% in year one

Additional Benefits:

- Real-time predictive maintenance (48-hour advance warning vs 7-day batch forecast)

- Reduction of unplanned downtime from 15% to 3%

- Equipment longevity improvement from prevented stress failures

- Supply chain resilience through local processing
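The headline savings in the three cases follow from (monthly cost before - monthly cost after) × 12. None of the cases states its implementation cost. The sketch below therefore assumes ROI is defined as (savings - cost) / cost and backs out the implementation cost that each ROI figure would imply. Treat those outputs as illustrations of that definition, not as reported figures.

```python
# (monthly cost before, monthly cost after, stated year-one ROI) from the three cases above.
CASES = {
    "fraud_detection": (18_000, 2_400, 3.40),
    "demand_forecasting": (14_000, 3_200, 2.85),
    "maintenance_prediction": (22_000, 4_100, 3.90),
}


def annual_savings(monthly_before: float, monthly_after: float) -> float:
    """Twelve months of the monthly cost reduction."""
    return (monthly_before - monthly_after) * 12


def implied_implementation_cost(savings: float, roi: float) -> float:
    """Assumes ROI = (savings - cost) / cost, so cost = savings / (1 + ROI)."""
    return savings / (1 + roi)


if __name__ == "__main__":
    for name, (before, after, roi) in CASES.items():
        s = annual_savings(before, after)
        cost = implied_implementation_cost(s, roi)
        print(f"{name}: savings ${s:,.0f}, implied implementation cost ${cost:,.0f}")
```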

Competitive Economics: Who Wins, Who Loses

The 2026 Competitive Divide

By the end of 2026, enterprises will fall into three categories:

High-Cost AI Implementers (60% of enterprises):

- Still deploying full models to cloud infrastructure

- Batch processing for latency-tolerant workloads

- No edge deployment strategy

- Infrastructure costs: $100K-500K annually for modest AI initiatives

- Infrastructure consuming 15-25% of AI project budgets

- Unable to scale AI due to cost constraints

Cost-Optimized AI Leaders (15% of enterprises):

- Strategic model selection and right-sizing

- Edge-first hybrid architectures

- Real-time streaming data pipelines

- Infrastructure costs: $20K-80K annually for equivalent capability

- Infrastructure consuming 3-8% of AI project budgets

- Ability to scale AI to 5-10x more use cases with same budget

AI-Abstaining Organizations (25% of enterprises):

- Cost uncertainty prevents AI adoption

- No infrastructure modernization underway

- Risk of competitive obsolescence as AI becomes table-stakes

The Compliance & Risk Multiplier

Adding ESG and carbon regulatory pressure:

High-Cost Infrastructure:

- Carbon emissions: 50-100 tons CO2 annually per workload

- EU carbon tax exposure: €4,000-12,000 annually

- ESG rating penalties: 5-10% valuation impact for energy-intensive operations

- Total cost-of-ownership including carbon: $110K-525K annually

Optimized Infrastructure:

- Carbon emissions: 10-20 tons CO2 annually per workload

- EU carbon tax exposure: €800-2,400 annually

- ESG rating premiums: 2-5% valuation uplift for efficient operations

- Total cost-of-ownership including carbon incentives: $18K-80K annually

The Competitive Multiplier: High-cost operators face a $92K-445K annual disadvantage versus optimized competitors, driven by infrastructure inefficiency and carbon-related regulatory costs

2026 Budget Planning: The Strategic Framework

Build vs. Buy Decision Matrix

For enterprises planning 2026 AI infrastructure budgets:

Strategic Recommendation for 2026:

- Start with cloud-based pilots (rapid time-to-value)

- Transition proven workloads to managed edge platforms (cost optimization)

- Keep 10-20% cloud capacity for surge and new experimentation

- Plan for geographic distribution by Q3 2026

Implementation Roadmap

Q1 2026: Assessment & Planning

- Current AI infrastructure audit

- Workload characterization (batch vs. real-time)

- Model optimization opportunity assessment

- ROI calculation for edge migration

Q2 2026: Pilot & Proof

- Deploy edge infrastructure in one geographic location

- Migrate 1-2 proven workloads to optimized architecture

- Measure latency, cost, and reliability improvements

- Gather operational learnings

Q3 2026: Scaled Deployment

- Expand edge infrastructure to 3-5 geographic locations

- Migrate 30-50% of suitable AI workloads

- Achieve 40-60% cost reduction on migrated workloads

- Plan for 2027 expansion

Q4 2026: Full Optimization

- Complete migration of suitable workloads

- Implement advanced optimization (quantization, distillation)

- Achieve mature hybrid architecture

- Plan for AI expansion in 2027

Why Companies Without Strategic Infrastructure Planning Lose

The Cost Escalation Trap

Without strategic infrastructure planning, companies follow this trajectory:

- Months 1-6: Initial Success - Cloud deployment works perfectly for pilots

- Months 6-12: Scaling Begins - Infrastructure costs escalate 3-5x faster than expected

- Months 12-18: Budget Crisis - AI infrastructure consuming 20-30% of AI budgets

- Months 18-24: Capability Freeze - No budget for new AI initiatives; existing projects cut corners

- Year 3+: Competitive Disadvantage - Competitors with cost-optimized infrastructure deploy 5-10x more AI use cases

The Infrastructure-Capability Limitation

Without cost optimization:

- Can afford 10-15 AI projects at $120K-150K infrastructure cost each

- Competitors with optimized infrastructure afford 50-75 projects at $20K-30K cost each

- Effective AI capability gap: 5-7x disadvantage

This infrastructure cost difference explains why only 12% of enterprises achieve "AI leadership" status—most can't afford the infrastructure required for scaled deployment.

Why Fracto's Infrastructure-First Approach Transforms Economics

The critical difference between AI projects that scale and those that fail is rarely the model quality—it's infrastructure strategy. Fracto's fractional CTO approach addresses this by:

Strategic Infrastructure Audit: Identifying optimization opportunities worth $100K-500K annually through architectural analysis alone

Edge-First Architecture Design: Designing hybrid systems that reduce infrastructure costs 40-70% while improving latency 80-95%

Model Optimization Implementation: Deploying quantization, distillation, and pruning that achieve 4-10x compute efficiency improvements

Cost-to-Value Optimization: Ensuring infrastructure costs never exceed 8-12% of total AI project budgets

Ongoing Economics Management: Monitoring and optimizing costs continuously as workloads evolve

The organizations that win in the AI economy aren't necessarily those with the best models—they're the ones with the smartest infrastructure. Strategic infrastructure planning that costs $50K-100K in consulting delivers $200K-500K annual savings and enables 5-7x greater AI initiative scaling.

Ready to transform your AI infrastructure economics? Schedule a complimentary infrastructure cost optimization assessment with Fracto's specialists to identify your hidden savings opportunities and design a 2026 deployment strategy.


Fracto by W3Blendr Ltd.

Athlone, Co. Westmeath,

Republic of Ireland